Method

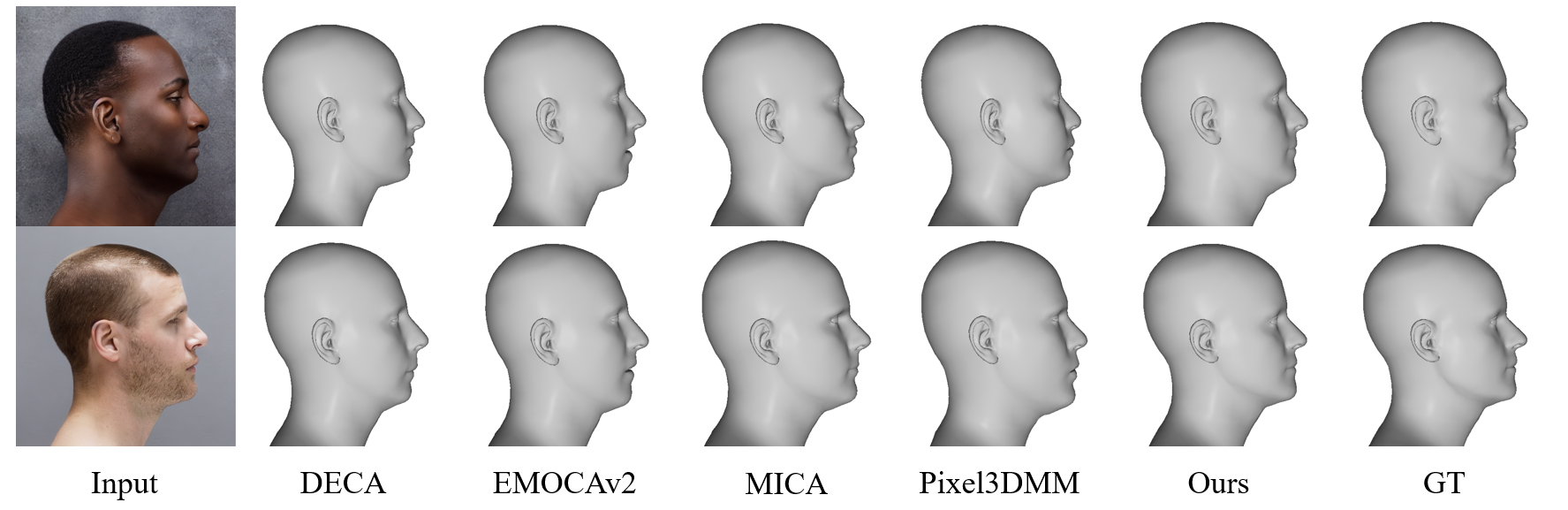

The project has two parts: a profile-focused benchmark (ProfileSynth) and a baseline regressor (Profile3DMM). ProfileSynth supplies RGB images paired with exact FLAME labels for strictly lateral views. Profile3DMM keeps the regressor intentionally simple, so that the effects of profile-specific training data and evaluation can be studied directly rather than being confounded with architectural changes.

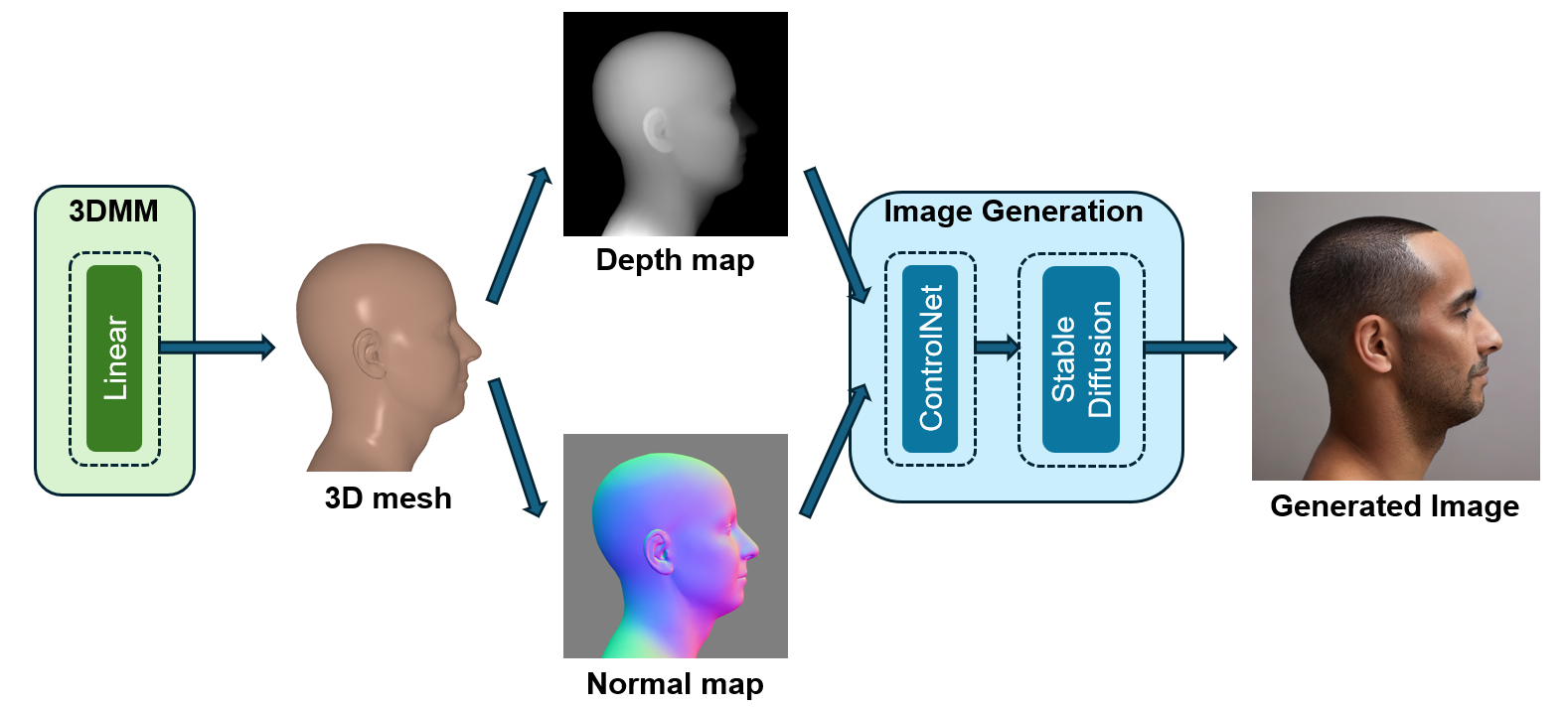

ProfileSynth

ProfileSynth contains 100,000 synthetic profile samples with head yaw between 85 and 95 degrees. Each sample is built in three steps: FLAME shape and pose parameters are sampled; geometry cues (depth, surface normals, silhouettes, and landmarks) are rendered from the resulting head; and geometry-conditioned image generation synthesizes a photorealistic profile RGB image from those cues. Because every image is generated from known geometry, it comes with exact 3D labels.
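The parameter-sampling step can be sketched as below. This is a minimal illustration under stated assumptions, not ProfileSynth's actual sampler: the number of shape coefficients (100), the Gaussian prior on shape, zero pitch and roll, and a y-up head frame in which yaw is a rotation about the y axis are all assumptions, and the names `sample_profile_params` and `FlameSample` are hypothetical. Only the 85 to 95 degree yaw range comes from the description above.

```python
import math
import random
from dataclasses import dataclass

# Assumption: 100 FLAME shape coefficients (the source does not state how many are used).
NUM_SHAPE = 100
# Strict lateral yaw range stated for ProfileSynth.
YAW_MIN_DEG, YAW_MAX_DEG = 85.0, 95.0


@dataclass
class FlameSample:
    """Hypothetical container for one sampled parameter set."""
    shape: list[float]       # FLAME shape (identity) coefficients
    global_rot: list[float]  # axis-angle head rotation in radians
    yaw_deg: float           # yaw in degrees, kept for bookkeeping


def sample_profile_params(rng: random.Random, shape_std: float = 1.0) -> FlameSample:
    """Sample one strictly lateral FLAME parameter set (illustrative only)."""
    # Assumption: shape coefficients drawn from an isotropic Gaussian prior.
    shape = [rng.gauss(0.0, shape_std) for _ in range(NUM_SHAPE)]
    # Yaw drawn uniformly from the stated range.
    yaw_deg = rng.uniform(YAW_MIN_DEG, YAW_MAX_DEG)
    # A pure rotation about the (assumed vertical) y axis has axis-angle vector
    # (0, yaw, 0); pitch and roll are assumed to be zero here.
    global_rot = [0.0, math.radians(yaw_deg), 0.0]
    return FlameSample(shape=shape, global_rot=global_rot, yaw_deg=yaw_deg)


if __name__ == "__main__":
    rng = random.Random(0)
    batch = [sample_profile_params(rng) for _ in range(5)]
    for s in batch:
        print(f"yaw={s.yaw_deg:.2f} deg, first shape coeff={s.shape[0]:.3f}")
```

Rendering the geometry cues and generating the RGB image would then consume each sampled parameter set; those stages depend on a FLAME implementation and an image generator that the description does not specify, so they are left out of this sketch.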

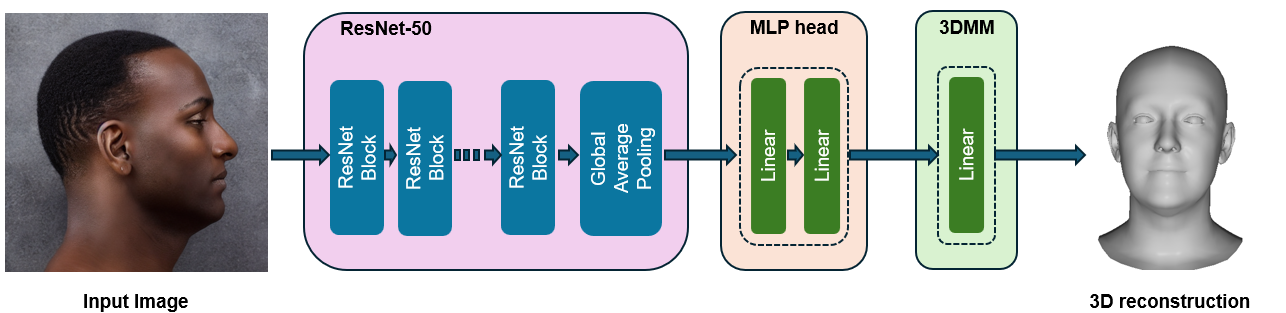

Profile3DMM

The baseline pairs an ImageNet-pretrained ResNet-50 backbone with an MLP head that regresses FLAME shape parameters and head pose. It is meant as a compact reference model for the full-profile (strictly lateral) setting, not as a new, architecture-heavy reconstruction system.
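A minimal sketch of the regression head follows, written in NumPy so it runs without a deep-learning framework. It assumes the head receives the 2048-dimensional globally pooled ResNet-50 feature; the hidden width (512), the split into 100 shape coefficients plus a 3-value axis-angle pose, and the name `MLPHead` are hypothetical choices, not details confirmed by the description. Weights are randomly initialized, so the outputs carry no meaning until the head is trained.

```python
import numpy as np

FEAT_DIM = 2048  # size of ResNet-50's globally average-pooled feature vector
HIDDEN = 512     # hypothetical hidden width
NUM_SHAPE = 100  # assumption: number of regressed FLAME shape coefficients
NUM_POSE = 3     # assumption: head pose as an axis-angle rotation


class MLPHead:
    """Hypothetical two-layer MLP mapping backbone features to FLAME shape and pose."""

    def __init__(self, rng: np.random.Generator) -> None:
        out_dim = NUM_SHAPE + NUM_POSE
        # He-style initialization for the ReLU layer, scaled init for the output layer.
        self.w1 = rng.normal(0.0, np.sqrt(2.0 / FEAT_DIM), size=(FEAT_DIM, HIDDEN))
        self.b1 = np.zeros(HIDDEN)
        self.w2 = rng.normal(0.0, np.sqrt(1.0 / HIDDEN), size=(HIDDEN, out_dim))
        self.b2 = np.zeros(out_dim)

    def __call__(self, feats: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
        """feats: (batch, FEAT_DIM) -> (shape (batch, NUM_SHAPE), pose (batch, NUM_POSE))."""
        hidden = np.maximum(feats @ self.w1 + self.b1, 0.0)  # linear layer + ReLU
        out = hidden @ self.w2 + self.b2                     # single joint output vector
        # Split the joint prediction into shape and pose slices.
        return out[:, :NUM_SHAPE], out[:, NUM_SHAPE:]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    head = MLPHead(rng)
    feats = rng.normal(size=(4, FEAT_DIM))  # stand-in for backbone features
    shape, pose = head(feats)
    print(shape.shape, pose.shape)
```

In a real training setup the features would come from the pretrained ResNet-50 with its classification layer removed. Predicting one joint vector and slicing it into shape and pose keeps the head a single small stack, in keeping with the baseline's emphasis on simplicity.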